In 1989, ransomware arrived by floppy disk. The ransom demand was $189. Today, the average payment exceeds $800,000, attack frequency has increased 70–80% year-over-year, and the criminal ecosystem behind it generates over $57 billion annually. That is not evolution. It is a different threat entirely, and AI is what changed it.

What began with the rudimentary AIDS Trojan has matured into a multi-billion-dollar criminal economy powered by sophisticated tooling, affiliate networks, and artificial intelligence. But raw dollar figures only capture part of the picture. The operational sophistication of these attacks has undergone a qualitative shift. This post examines the technical mechanics behind ransomware’s growth, the specific ways AI is amplifying threat actor effectiveness, and what the defensive landscape looks like in response.

Ransomware is Now an Industry

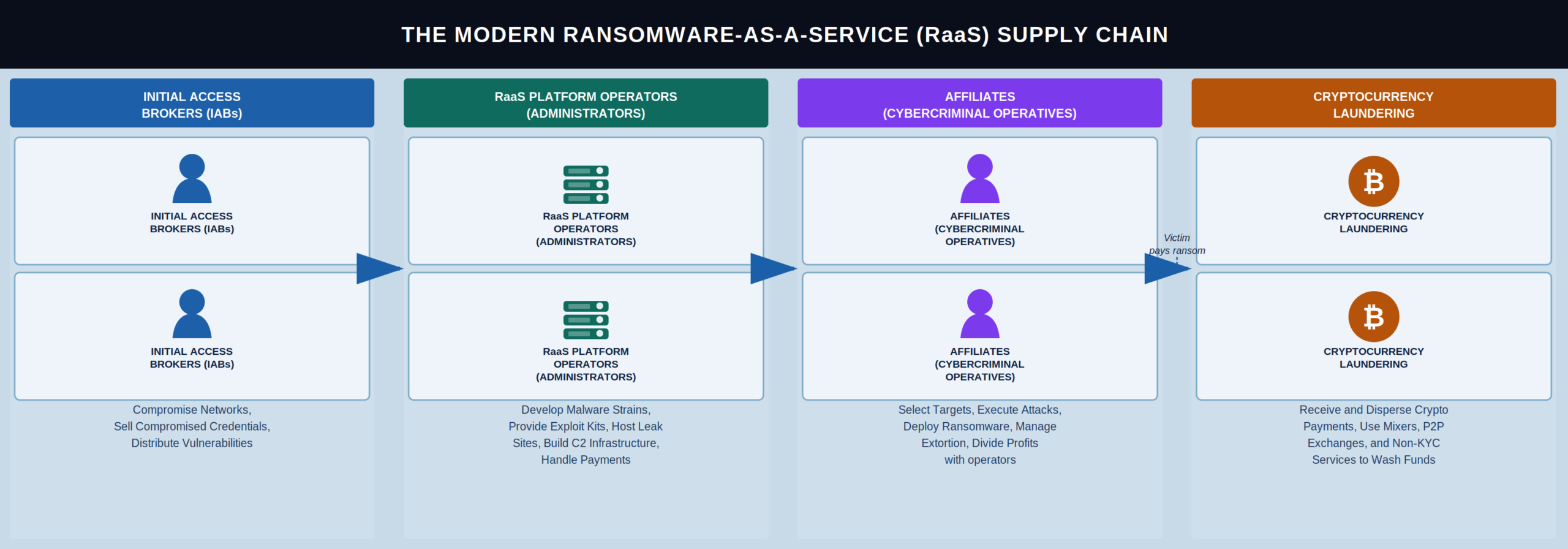

Today’s ransomware attacks are rarely the work of a lone actor. They operate within a layered criminal economy.

Ransomware-as-a-Service (RaaS) platforms like LockBit, BlackCat/ALPHV, and their successors have industrialized the attack pipeline. Core developers build and maintain the malware, while affiliates, often with limited technical skill, handle initial access, lateral movement, and deployment. Revenue splits typically fall around 70/30 or 80/20 in favor of the affiliate. This division of labor has lowered the skill floor and dramatically expanded the pool of actors capable of running an attack.

Initial Access Brokers (IABs) form another critical layer. These actors specialize in compromising networks and selling that access (obtained via stolen credentials, exploited VPN appliances, or webshell persistence) to ransomware affiliates on dark web marketplaces. A single compromised RDP endpoint might sell for $5,000–$50,000 depending on the target’s revenue and sector.

Double and triple extortion models have become standard. Attackers exfiltrate data before encryption, threatening public leaks on dedicated shame sites. Some groups additionally DDoS the victim or contact their customers and partners directly, compounding pressure to pay.

The AI Inflection Point

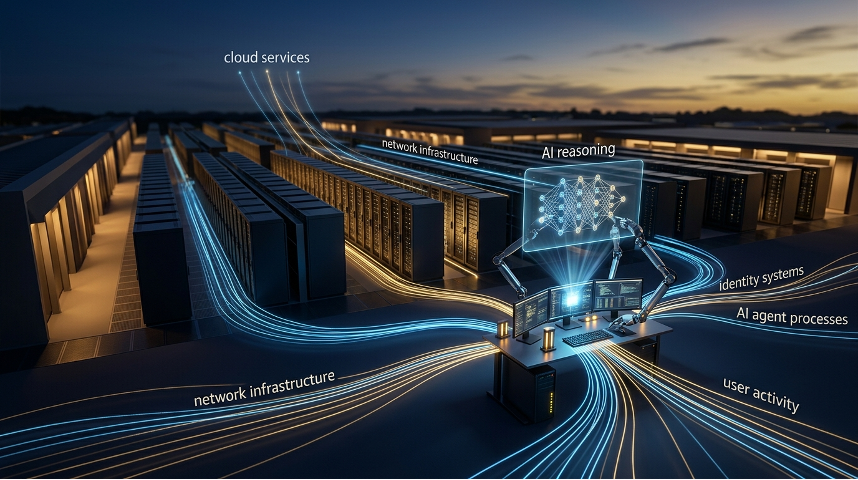

AI has changed this threat in two ways: it has amplified offensive capability and created new attack surfaces through AI-dependent infrastructure. Both deserve close technical scrutiny.

1. AI-Enhanced Social Engineering

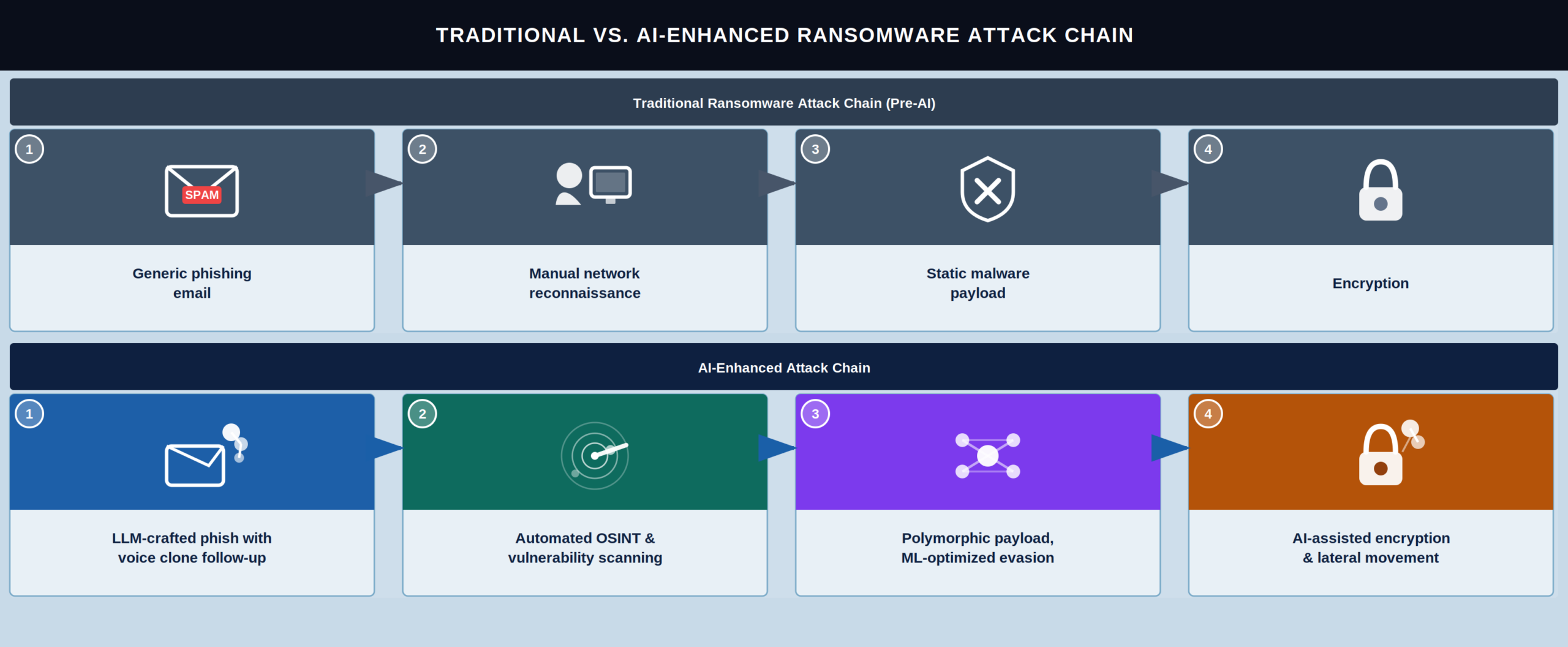

Phishing remains the dominant initial access vector for ransomware, accounting for roughly 40–60% of successful intrusions depending on the study. AI has made phishing dramatically more effective along several axes.

Large language models eliminate linguistic tells. Before LLMs, phishing from non-English-speaking groups was often easy to spot: grammatical errors, awkward phrasing, and off-tone language gave it away. Those tells are gone. Modern LLMs produce fluent, context-aware prose in any target language and, when provided with samples, can be prompted to mimic specific corporate communication styles, matching the tone of a company’s internal memos or a particular executive’s writing patterns.

Voice cloning enables vishing at scale. AI-driven voice synthesis has enabled a new class of attacks. In several documented incidents, threat actors have used cloned executive voices in phone calls to authorize fraudulent wire transfers or extract credentials from IT helpdesks. Modern synthesis tools need only seconds of sample audio, which is readily available from earnings calls, conference recordings, and LinkedIn videos. Over a phone line, the output can be very difficult for a listener to distinguish from the real speaker.

Automated reconnaissance and targeting. LLMs can rapidly parse and summarize public information about target organizations (SEC filings, LinkedIn profiles, press releases, GitHub repositories) to construct highly tailored spear-phishing campaigns. What previously required hours of manual OSINT work can now be accomplished in minutes, allowing attackers to scale personalized targeting across hundreds of organizations simultaneously.

2. AI-Augmented Malware Development

Beyond social engineering, AI tools are accelerating the malware development lifecycle itself.

Polymorphic code generation. Threat actors are leveraging LLMs to generate polymorphic malware variants: functionally equivalent code with different syntactic structures, variable names, and control flow patterns. This directly undermines signature-based detection. While traditional polymorphic engines have existed for decades, LLM-generated variants exhibit far greater diversity and can be produced on demand without deep reverse engineering expertise.

Vulnerability discovery and exploit development. AI-assisted fuzzing and code analysis tools have shortened the window between vulnerability discovery and weaponized exploit availability. Researchers have demonstrated that LLMs can identify certain classes of vulnerabilities in source code (buffer overflows, injection flaws, logic errors) and in some cases generate proof-of-concept exploit code. These capabilities are still imperfect, but they are improving fast, and the skill floor keeps dropping.

Evasion technique optimization. Machine learning models can be trained to test malware payloads against defensive tools and iteratively modify them to bypass detection. This creates an adversarial feedback loop: the attacker’s AI probes the defender’s AI, identifies detection boundaries, and adjusts the payload accordingly. Sandboxing evasion, EDR unhooking, and AMSI by

The Data Is Hard to Ignore

The data doesn’t prove causation. But the correlation is hard to ignore.

Attack frequency. The number of ransomware incidents reported to law enforcement and tracked by threat intelligence firms has increased roughly 70–80% year-over-year in 2024 and 2025, compared to a more typical 15–25% annual increase in prior years. This inflection correlates with the widespread availability of capable open-source and commercial LLMs beginning in 2023.
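To make the difference between those growth rates concrete, the short calculation below compounds each rate over two years. It is only a worked-arithmetic sketch: the function name `cumulative_growth` is illustrative, the rates are the ones quoted above, and the two-year horizon is an assumption matching "2024 and 2025". Sustained for two years, 70–80% annual growth roughly triples incident volume (about 2.9x to 3.2x), while 15–25% yields about 1.3x to 1.6x.

```python
def cumulative_growth(annual_rate: float, years: int) -> float:
    """Return the multiplier on incident volume after compounding for `years`."""
    return (1.0 + annual_rate) ** years


# Rates quoted in the text; the two-year horizon is an assumption.
SCENARIOS = [
    ("prior years, low", 0.15),
    ("prior years, high", 0.25),
    ("2024-2025, low", 0.70),
    ("2024-2025, high", 0.80),
]

if __name__ == "__main__":
    for label, rate in SCENARIOS:
        multiplier = cumulative_growth(rate, 2)
        print(f"{label:18s} {rate:.0%}/yr -> {multiplier:.2f}x after 2 years")
```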

Time-to-compromise. The median dwell time, the period between initial access and ransomware deployment, has decreased significantly. Where attackers once spent weeks inside a network conducting manual reconnaissance, AI-assisted tooling has compressed this to days or even hours in some cases. Secureworks and CrowdStrike have both documented this trend in their annual threat reports.

Phishing efficacy. Click-through rates on phishing campaigns have risen as AI-generated content has become more convincing. A 2025 study from a major email security vendor found that AI-crafted phishing emails achieved click rates 2–3x higher than traditional template-based campaigns in controlled testing environments.

Ransom demands. Average ransom payments have continued to climb, reaching over $800,000 by mid-2025. This reflects both the increased severity of attacks and the improved targeting of high-value organizations.

How Defenders Are Responding (And Where They’re Falling Short)

Defenders have responded. AI is being deployed defensively as well, though the asymmetry between attack and defense persists.

Behavioral detection and anomaly modeling. Modern EDR and XDR platforms increasingly rely on machine learning models trained on telemetry data to detect anomalous behavior (unusual process trees, atypical file access patterns, suspicious lateral movement) rather than static signatures. These systems can flag ransomware precursor activity before encryption begins, but they require careful tuning to manage false positive rates in production environments.
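As a rough illustration of what a behavioral signal can look like, the sketch below uses one simple, well-known heuristic: encrypted output has near-maximal byte entropy, so a burst of high-entropy file writes from one process is suspicious. This is not how any particular EDR product works, and it stands in for the far richer ML models described above. The thresholds and the function name `looks_like_bulk_encryption` are hypothetical.

```python
import math
import os
from collections import Counter


def shannon_entropy(data: bytes) -> float:
    """Shannon entropy in bits per byte (0.0 for empty input, at most 8.0)."""
    if not data:
        return 0.0
    total = len(data)
    counts = Counter(data)
    return -sum((n / total) * math.log2(n / total) for n in counts.values())


# Hypothetical thresholds; a real deployment would tune these on its own telemetry.
ENTROPY_THRESHOLD = 7.5      # bits per byte
MIN_SUSPICIOUS_WRITES = 3    # high-entropy writes needed before flagging


def looks_like_bulk_encryption(write_buffers: list[bytes]) -> bool:
    """Flag a batch of writes if enough of them look like ciphertext."""
    high = sum(1 for buf in write_buffers
               if shannon_entropy(buf) >= ENTROPY_THRESHOLD)
    return high >= MIN_SUSPICIOUS_WRITES


if __name__ == "__main__":
    text_writes = [b"quarterly report draft " * 200 for _ in range(5)]
    random_writes = [os.urandom(4096) for _ in range(5)]  # stand-in for ciphertext
    print("plain text flagged:", looks_like_bulk_encryption(text_writes))
    print("random data flagged:", looks_like_bulk_encryption(random_writes))
```

Compressed archives and media files are also high-entropy, which is one concrete source of the false positives mentioned above. In practice a signal like this would be combined with others rather than used alone.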

LLM-powered threat intelligence. Security operations centers are using LLMs to accelerate alert triage, correlate indicators of compromise across disparate data sources, and generate human-readable summaries of complex attack chains. This does not prevent attacks directly but compresses the analyst response loop, which is critical when attacker dwell times are shrinking.
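The correlation step itself does not need an LLM. A minimal sketch, assuming each alert is a dict with a hypothetical `id` and a list of `indicators`, groups alerts that share any indicator, directly or transitively, using a union-find structure. The field names and sample values (documentation-range IPs and `.example` domains) are illustrative only. An LLM would then summarize each resulting cluster for the analyst.

```python
from collections import defaultdict


def correlate_alerts(alerts: list[dict]) -> list[set[str]]:
    """Group alert IDs that share at least one indicator, directly or transitively."""
    parent: dict[str, str] = {}

    def find(x: str) -> str:
        while parent[x] != x:
            parent[x] = parent[parent[x]]  # path halving keeps trees shallow
            x = parent[x]
        return x

    def union(a: str, b: str) -> None:
        parent[find(a)] = find(b)

    first_seen: dict[str, str] = {}  # indicator -> first alert ID that carried it
    for alert in alerts:
        alert_id = alert["id"]
        parent.setdefault(alert_id, alert_id)
        for indicator in alert["indicators"]:
            if indicator in first_seen:
                union(alert_id, first_seen[indicator])
            else:
                first_seen[indicator] = alert_id

    clusters: dict[str, set[str]] = defaultdict(set)
    for alert_id in parent:
        clusters[find(alert_id)].add(alert_id)
    return list(clusters.values())


if __name__ == "__main__":
    sample = [
        {"id": "edr-1", "indicators": ["203.0.113.7", "login.example"]},
        {"id": "mail-7", "indicators": ["login.example"]},
        {"id": "fw-3", "indicators": ["198.51.100.9"]},
        {"id": "proxy-2", "indicators": ["203.0.113.7"]},
    ]
    for cluster in correlate_alerts(sample):
        print(sorted(cluster))
```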

AI-driven email filtering. Email security gateways have incorporated transformer-based NLP models to detect AI-generated phishing content by analyzing semantic coherence, stylistic anomalies, and contextual plausibility rather than relying on known-bad sender lists or keyword matching alone.

Automated patch prioritization. With AI accelerating exploit development, defenders need to triage vulnerabilities faster. ML-based vulnerability scoring systems, going beyond static CVSS ratings, now incorporate exploit availability, threat actor interest, and asset exposure context to prioritize patching decisions dynamically.
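The idea of scoring beyond CVSS can be sketched with a hand-weighted linear score. This is a simplification standing in for a learned model, not a description of any vendor's system. The `Finding` fields, the weights, and the placeholder identifiers are all assumptions made for illustration.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Finding:
    vuln_id: str             # placeholder identifier, not a real CVE
    cvss: float              # base score, 0.0 to 10.0
    exploit_available: bool  # public or commercial exploit known to exist
    actor_interest: float    # 0.0 to 1.0, hypothetical threat-intel signal
    internet_exposed: bool   # affected asset reachable from the internet


# Hypothetical weights summing to 1.0; a real system would learn or tune these.
W_CVSS, W_EXPLOIT, W_INTEREST, W_EXPOSURE = 0.40, 0.25, 0.15, 0.20


def priority(f: Finding) -> float:
    """Combine severity with exploitation context into a 0.0-1.0 score."""
    return (W_CVSS * (f.cvss / 10.0)
            + W_EXPLOIT * float(f.exploit_available)
            + W_INTEREST * f.actor_interest
            + W_EXPOSURE * float(f.internet_exposed))


def rank(findings: list[Finding]) -> list[Finding]:
    """Return findings ordered from most to least urgent."""
    return sorted(findings, key=priority, reverse=True)


if __name__ == "__main__":
    findings = [
        Finding("EXAMPLE-0001", 9.8, False, 0.1, False),
        Finding("EXAMPLE-0002", 7.5, True, 0.8, True),
    ]
    for f in rank(findings):
        print(f"{f.vuln_id}: {priority(f):.3f}")
```

With these illustrative weights, the 7.5-rated finding with a known exploit on an internet-facing asset outranks the 9.8-rated finding on an unexposed asset with no known exploit, which is the kind of reordering that static CVSS alone would miss.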

Despite these advances, the defender’s challenge remains structurally harder. Attackers need to succeed once; defenders must succeed every time. AI amplifies both sides, but the offensive advantage in speed, scalability, and adaptability is significant.

The Next 24 Months

Several trends will shape the ransomware-AI intersection over the next 12–24 months.

Autonomous attack agents. The progression from AI-assisted tools to semi-autonomous attack agents is already underway. Research demonstrations have shown LLM-driven agents capable of performing multi-step penetration testing tasks (scanning, exploitation, privilege escalation, and data exfiltration) with minimal human guidance. The gap between research proof-of-concept and criminal deployment is narrowing.

AI model poisoning as an attack vector. As organizations deploy AI systems in security-critical roles, the models themselves become targets. Poisoned training data and adversarial inputs crafted to cause misclassification, such as making a detection model ignore ransomware behavior, represent a growing concern.

Regulatory and policy responses. Governments worldwide are grappling with how to regulate dual-use AI capabilities. The tension between open model access (which benefits defenders and researchers) and the risk of enabling attackers has no easy resolution and will remain a central policy debate.

Cryptographic resilience. On a longer horizon, advancements in post-quantum cryptography and hardware-rooted attestation may shift the defensive landscape, but these are years away from

Conclusion

AI has not changed what ransomware is. It has changed how fast it arrives, how convincingly it’s delivered, and how difficult it is to stop.

For security practitioners, the takeaway is operational urgency. Detection strategies must evolve beyond signatures toward behavioral and contextual analysis. Incident response playbooks must account for compressed timelines. Phishing defenses must adapt to content that no longer carries the telltale markers of human error. And organizations must invest in the fundamentals (network segmentation, offline backups, privilege management, and regular testing) that remain the most reliable bulwark even as the threat evolves.

The AI arms race in cybersecurity is not a future scenario. It is already here, and the ransomware ecosystem is one of its most active proving grounds.

Brad Potteiger is the Chief Technology Officer at Arms Cyber, where he leads the development of next-generation preemptive security and anti-ransomware technology. Arms Cyber’s patented Stealth Posture Management platform protects organizations across Windows, Linux, and macOS by making critical data invisible and resilient to attackers.