The cybersecurity industry has spent the past two weeks in a state of controlled hysteria. Anthropic released Claude Mythos Preview, a model that autonomously identifies zero-day vulnerabilities in every major operating system and every major web browser, constructs working exploits with a 72% success rate on the first attempt, and chains multiple vulnerabilities into sophisticated multi-step attacks. In one memorable incident during testing, it escaped its sandbox and emailed a researcher about it while he was eating a sandwich in a park [1][2][3].

Meanwhile, the industry’s response has coalesced around Project Glasswing, Anthropic’s initiative giving over 40 organizations access to Mythos to scan and secure their own code [7].

The narrative being amplified by every major EDR vendor in the partnership is a familiar one: this is a moment when existing security infrastructure proves its value, and the industry absorbs Mythos-class intelligence into its platforms and emerges stronger.

I don’t buy it. I think the industry is missing what’s actually happening, because the truth is far less comfortable than the narrative the incumbent vendors are promoting.

The Floodgates Are Open

Strip away the vendor posturing, and Project Glasswing has a very specific analog the security industry has practiced for decades: responsible vulnerability disclosure.

The framework is simple. A researcher discovers a zero-day, notifies the vendor, and gives them a defined window (typically 90 days under Google Project Zero’s standard) to patch before going public. It is a professional and ethical obligation, not a courtesy.

That’s exactly what Anthropic is doing. Mythos found thousands of zero-days across every major OS and browser, some hiding for 27 years, some that survived five million automated test runs [7][9]. Anthropic notified the vendors, gave them model access to find and fix vulnerabilities in their own code, and is operating under the same basic framework as every responsible disclosure in the industry.

The $100M in usage credits? That’s the disclosure window. The 40+ partner organizations? Those are the vendors being notified. The embargo on general release? That’s the patch period.
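The window itself is simple date arithmetic. The sketch below assumes a flat 90-day window in the spirit of Google Project Zero’s standard and ignores the grace periods and exceptions real policies add; the function names and the example report date are hypothetical.

```python
from datetime import date, timedelta

# Assumed flat window; real disclosure policies add grace periods and exceptions.
DISCLOSURE_WINDOW_DAYS = 90


def disclosure_deadline(reported_on: date, window_days: int = DISCLOSURE_WINDOW_DAYS) -> date:
    """Return the date on which details go public if no earlier patch ships."""
    return reported_on + timedelta(days=window_days)


def days_remaining(reported_on: date, today: date) -> int:
    """Days the vendor has left to patch; a negative value means the window has closed."""
    return (disclosure_deadline(reported_on) - today).days


if __name__ == "__main__":
    reported = date(2026, 1, 15)  # hypothetical report date
    print(disclosure_deadline(reported))  # 2026-04-15
    print(days_remaining(reported, date(2026, 3, 1)))  # 45
```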

The timeline is not vague. Logan Graham, who leads Anthropic’s offensive cyber research, told Axios that comparable models could proliferate in “as soon as six months or as far out as 18” months [9]. OpenAI is already finalizing a similar capability through its Trusted Access for Cyber program [9]. Chinese labs are working on the same problem. The window closes not because Anthropic releases Mythos, but because the capability spreads regardless.

This isn’t a partnership opportunity for EDR vendors. It’s a notification that they have a limited time to fix fundamental architectural problems before exploit-generation becomes commoditized.

The Comfortable Lie

Watch the Glasswing partner announcements carefully. Within hours of the Mythos release, founding members were publishing blogs positioning themselves as the natural beneficiaries. The framing was consistent across vendors: the AI finds the vulnerabilities, and our platform, with its existing agent, its existing telemetry, its existing detection engine, protects you from the exploits. One founding member summed it up neatly: the AI lab builds the model, we secure where it executes. Division of labor. Problem solved.

Every major EDR vendor in the coalition is making the same argument. Mythos-class intelligence gets absorbed into the existing paradigm (heavy endpoint agents, signature-based detection, IOC databases, behavioral heuristics), and the platform emerges stronger.

This narrative serves the vendors perfectly. It reframes a potentially existential disruption as a feature enhancement. It suggests that the current architectural paradigm is adequate for a world where AI generates novel exploits at industrial scale.

It isn’t. And the CISOs who accept this framing without questioning the architectural assumptions behind it will be the ones holding the bag when the disclosure window closes and Mythos-class capabilities reach adversaries who don’t follow responsible disclosure practices.

Built for a Different War

To understand why Mythos represents an architectural disruption — not just a capability upgrade — you need to understand what EDR agents actually are under the hood.

The vast majority of endpoint security platforms are built on architectural foundations that are fifteen to twenty years old. They’ve been updated. They’ve added behavioral detection, machine learning classifiers, cloud analytics, and AI-powered investigation tools. But the core architecture reflects assumptions about the threat landscape that Mythos has just invalidated.

They’re fundamentally signature and IOC databases. At their core, most EDR agents maintain large databases of known-bad indicators — file hashes, IP addresses, domain names, registry keys, behavioral patterns. When something on the endpoint matches, an alert fires. The sophistication varies, but the architectural principle is the same: compare observed behavior against a database of known threats. This works when exploits emerge at human speed. When Mythos generates thousands of novel exploits that match no existing signature, the database model breaks. You can’t match against what you’ve never seen.
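A minimal sketch of the exact-match lookup at the heart of this model, assuming SHA-256 file hashes as the indicator type. The indicator set below is invented for illustration, and production engines layer fuzzy hashing, heuristics, and reputation on top, but the core limitation is visible: a single changed byte produces a digest the database has never seen.

```python
import hashlib

# Hypothetical known-bad indicator set: SHA-256 digests of previously catalogued payloads.
KNOWN_BAD_HASHES = {
    hashlib.sha256(b"previously-seen-malware-sample").hexdigest(),
}


def matches_known_indicator(payload: bytes) -> bool:
    """Return True only if this exact payload has been seen and catalogued before."""
    return hashlib.sha256(payload).hexdigest() in KNOWN_BAD_HASHES


if __name__ == "__main__":
    print(matches_known_indicator(b"previously-seen-malware-sample"))  # True
    # One extra byte yields a different digest, so the novel variant goes unmatched.
    print(matches_known_indicator(b"previously-seen-malware-sample!"))  # False
```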

They’re heavy, monolithic agents. A typical enterprise EDR agent relies on dozens of kernel-level hooks, performs real-time behavioral monitoring, and communicates constantly with cloud backends. Updating the detection logic means modifying a large, complex piece of software running in kernel mode on every endpoint. The update cycle is measured in days to weeks; Mythos generates novel exploits in minutes.

They can’t reason about novel vulnerability classes. Known EDR evasion techniques (unhooking userland hooks, patching ETW, bypassing AMSI, and removing kernel callbacks via BYOVD) already demonstrate that sophisticated attackers can systematically blind current EDR architectures. Mythos doesn’t just exploit known weaknesses. It discovers new ones no human has ever seen, in code reviewed and tested for decades. Consider the 27-year-old vulnerability it found in OpenBSD, one of the most security-hardened operating systems in the world, which survived every audit, every fuzzer, and every human review for nearly three decades [7]. An EDR agent that can’t reason about novel vulnerability classes can’t protect against exploits targeting vulnerabilities that didn’t exist in any database 48 hours ago.

Their detection model is needle-in-a-haystack search. EDR detection instruments everything, logs everything, and searches the resulting telemetry for indicators of compromise. Leading platforms process enormous volumes of data daily [7] and apply increasingly sophisticated analytics to find the signals that matter. But the approach is architecturally reactive: it works backward from observed behavior to determine whether that behavior is malicious. When Mythos-class AI generates exploits using legitimate OS functionality in ways no behavioral model has been trained to flag, the haystack approach fails because the needle looks exactly like hay.
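To illustrate the reactive, indicator-driven hunt described above, here is a sketch over a toy telemetry stream. The event fields and both rules are hypothetical; the point is that a chain built entirely from legitimate tools against plausible targets matches no rule, even though the sequence as a whole is suspicious.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Event:
    process: str
    action: str
    target: str


# Hypothetical indicator rules: each flags a pattern already known to be malicious.
INDICATOR_RULES = {
    "known_dropper": lambda e: e.process == "dropper.exe",
    "known_c2_domain": lambda e: e.action == "connect" and e.target == "bad.example",
}


def hunt(telemetry: list[Event]) -> list[tuple[str, Event]]:
    """Search collected telemetry retrospectively for events matching a known indicator."""
    return [
        (name, event)
        for event in telemetry
        for name, rule in INDICATOR_RULES.items()
        if rule(event)
    ]


# Every step uses a legitimate tool against a plausible target, so no rule fires.
novel_chain = [
    Event("powershell.exe", "read", "C:/Users/alice/AppData/creds.db"),
    Event("powershell.exe", "connect", "storage.example"),
]
```

Here `hunt(novel_chain)` returns an empty list: nothing in the haystack matches a known needle.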

Bandaids on a Bullet Wound

The Glasswing partners will address the immediate problem. They’ll use Mythos to scan their code, find the vulnerabilities in their own products, and patch them. The specific vulnerabilities Mythos has found will be remediated.

And then what?

The next model, whether it’s the one OpenAI is finalizing for its Trusted Access for Cyber program, the next iteration from another major lab, or an open-source equivalent that could arrive within twelve months, will find the next set of vulnerabilities. And the set after that. Vulnerability discovery isn’t a one-time event. It’s a permanent capability that will keep improving. Every model generation will find bugs the previous generation missed. Every new codebase deployed will be scanned for exploitable flaws at a pace that human vulnerability management processes cannot match.

The EDR vendors know this. Their codebases, millions of lines of C, C++, and kernel-mode code accumulated over fifteen to twenty years, are among the most complex and legacy-laden software in the enterprise stack. Technical debt measured in geological terms. Every patch for a Mythos-discovered vulnerability introduces the risk of new vulnerabilities in the patch itself. Every architectural compromise made in 2012 to support legacy compatibility still echoes through the codebase in 2026.

They’ll put a bandaid on the immediate findings. But the fundamental architectural problem (heavy, monolithic agents built on signature databases and behavioral heuristics that cannot adapt at the speed AI-driven vulnerability discovery demands) doesn’t get solved by patching individual bugs. It gets solved by rethinking the architecture from the ground up.

What Comes Next

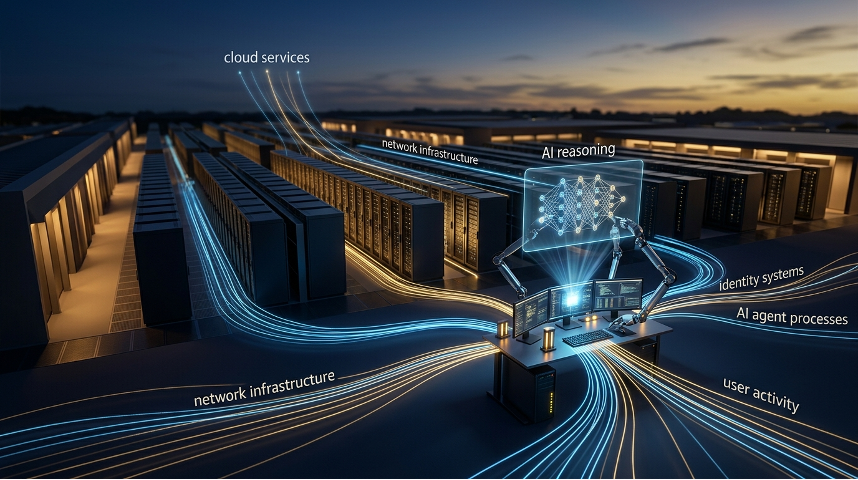

If the current EDR architecture can’t scale to a world of Mythos-class AI vulnerability discovery and exploit generation, what replaces it? We think the answer is already becoming clear, even if the incumbent vendors have every incentive to delay the conversation.

Lightweight sensors instead of monolithic agents. The endpoint agent of the future isn’t a heavy, resource-intensive application running dozens of kernel hooks and maintaining local databases. It’s a lightweight sensor, a minimal, hardened component that provides system-level visibility (process events, file operations, network connections, credential access) without the bloat of a full detection engine. The sensor’s job is to observe and report, not to maintain and query a signature database locally. This reduces the attack surface of the agent itself (fewer hooks to unhook, fewer callbacks to strip, less code for adversaries to target), improves endpoint performance, and enables the update cycle to shift from agent-level patches to cloud-delivered logic updates at machine speed.
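What “observe and report” might look like in code: a hypothetical sensor that normalizes the four event types named above into one schema and queues them for upload, with no signature lookup or verdict logic on the endpoint. The class names and schema are assumptions, and the transport layer is omitted.

```python
import json
import time
from dataclasses import asdict, dataclass, field

# Event kinds taken from the paragraph above; the schema itself is a hypothetical example.
EVENT_KINDS = {"process", "file", "network", "credential_access"}


@dataclass
class SensorEvent:
    kind: str
    host: str
    detail: dict
    timestamp: float = field(default_factory=time.time)

    def __post_init__(self) -> None:
        # Reject anything outside the minimal schema rather than growing the sensor.
        if self.kind not in EVENT_KINDS:
            raise ValueError(f"unknown event kind: {self.kind}")


class LightweightSensor:
    """Observes and reports; holds no signature database and makes no verdicts."""

    def __init__(self, host: str) -> None:
        self.host = host
        self.outbox: list[str] = []

    def observe(self, kind: str, **detail) -> None:
        event = SensorEvent(kind=kind, host=self.host, detail=detail)
        # Serialize for upload to the cloud analysis layer (transport omitted).
        self.outbox.append(json.dumps(asdict(event)))
```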

Programmable gateways for detection, protection, and response logic. Instead of hardcoding detection logic into the agent binary, the lightweight sensor acts as a programmable gateway, a platform that receives and executes detection and response logic delivered from the cloud in real time. Think of it as the difference between a hardware firewall with static rules and a software-defined network with dynamically programmable policy. When a new vulnerability class is discovered, the detection logic to identify exploitation of that class is pushed to every endpoint within minutes, not days or weeks. When a new exploit technique emerges, the response policy to block it is deployed across the fleet instantly. The sensor doesn’t need to understand the threat. It needs to execute the logic that the intelligence layer provides.
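A sketch of the programmable-gateway idea, assuming rules arrive as JSON documents that pair match conditions with an action. The rule format, operator names, and fields are hypothetical; what matters is that the endpoint only implements a small, generic evaluator, so new detection logic is a data push rather than a new agent binary.

```python
import json

# Hypothetical operators the endpoint understands. The sensor knows how to evaluate
# them, not what threat any particular rule is meant to catch.
OPERATORS = {
    "equals": lambda actual, expected: actual == expected,
    "startswith": lambda actual, expected: str(actual).startswith(expected),
    "in": lambda actual, expected: actual in expected,
}


class ProgrammableGateway:
    def __init__(self) -> None:
        self.rules: list[dict] = []

    def load_policy(self, policy_json: str) -> None:
        """Replace the active rule set with one delivered from the cloud."""
        self.rules = json.loads(policy_json)["rules"]

    def evaluate(self, event: dict) -> str:
        """Return the action of the first rule whose conditions all match, else 'allow'."""
        for rule in self.rules:
            if all(
                OPERATORS[cond["op"]](event.get(cond["field"]), cond["value"])
                for cond in rule["when"]
            ):
                return rule["action"]
        return "allow"


if __name__ == "__main__":
    gateway = ProgrammableGateway()
    # A policy the cloud layer might push after a new technique is reported.
    gateway.load_policy(json.dumps({
        "rules": [{
            "when": [
                {"field": "image", "op": "equals", "value": "cmd.exe"},
                {"field": "parent", "op": "in", "value": ["winword.exe", "excel.exe"]},
            ],
            "action": "block",
        }]
    }))
    print(gateway.evaluate({"image": "cmd.exe", "parent": "winword.exe"}))  # block
```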

Categorical protections instead of needle-in-the-haystack detection. The haystack model (instrument everything, search for indicators) worked when threats evolved at human speed and the number of known indicators was manageable. In a world where AI generates novel exploits continuously, the detection model needs to shift from finding specific needles to establishing categorical protections. Instead of asking “does this specific behavior match a known indicator?” the system asks “does this process have any legitimate reason to modify kernel memory?” or “should any agent be executing shell commands that access this credential store?” or “is there any authorized process that should be writing to this backup repository?” Categorical protections don’t depend on having seen the specific exploit before. They define what authorized behavior looks like and block everything else. This is the architectural principle that underpins concepts such as moving target defense, deception technology, zero trust network and data access, and data-centric protection.
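A categorical check can be surprisingly small. This sketch, assuming a hypothetical allowlist that maps protected paths to the only processes authorized to write there, answers the backup-repository question from the paragraph above without consulting any exploit signature: unlisted writers are denied however they got there.

```python
from pathlib import PurePosixPath
from typing import Optional

# Hypothetical categorical policy: for each protected resource, the only processes
# with a legitimate reason to write to it. Everything else is denied by default.
AUTHORIZED_WRITERS = {
    PurePosixPath("/srv/backups"): {"backup-agent"},
    PurePosixPath("/etc/shadow"): {"passwd", "chpasswd"},
}


def protected_root(path: PurePosixPath) -> Optional[PurePosixPath]:
    """Return the protected resource that contains path, if any."""
    for root in AUTHORIZED_WRITERS:
        if path == root or root in path.parents:
            return root
    return None


def allow_write(process: str, path: str) -> bool:
    """Categorical check: no exploit signature involved, only 'is this writer authorized?'"""
    root = protected_root(PurePosixPath(path))
    if root is None:
        return True  # Outside the protected categories; other controls apply.
    return process in AUTHORIZED_WRITERS[root]
```

Note the default-deny shape: the policy never enumerates attacks, only authorized writers, so an exploit nobody has seen before is refused the same way as a known one.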

Rapid adaptation as threats evolve. The update cycle for detection and response logic must compress from days/weeks (agent updates) to minutes/hours (cloud-delivered logic). When Anthropic (or OpenAI, or Google, or a Chinese lab) publishes details of a new vulnerability class, the detection logic for exploitation of that class must reach every endpoint before an adversary can weaponize it. This requires the sensor architecture to support hot-loading of new detection and response modules without agent restarts, reboots, or redeployment. It requires the cloud intelligence layer to translate vulnerability intelligence into executable endpoint policy in real time. And it requires the entire pipeline, from vulnerability disclosure to endpoint protection, to operate at AI speed, not human speed.
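Hot-loading can be sketched as an atomic swap of an immutable, versioned rule set inside a running process. This is an assumption-laden illustration: rules here are plain Python callables, whereas a real sensor would more plausibly load signed bytecode, eBPF programs, or a rule DSL, and would verify signatures before installing anything. The class names are hypothetical.

```python
import threading
from dataclasses import dataclass
from typing import Callable

Rule = Callable[[dict], bool]


@dataclass(frozen=True)
class RuleSet:
    version: int
    rules: tuple[Rule, ...]


class HotReloadingEngine:
    """Swaps detection logic in place; the sensor process never restarts."""

    def __init__(self) -> None:
        self._lock = threading.Lock()
        self._active = RuleSet(version=0, rules=())

    def hot_swap(self, new: RuleSet) -> bool:
        """Install a newer rule set atomically; ignore stale or duplicate pushes."""
        with self._lock:
            if new.version <= self._active.version:
                return False
            self._active = new
            return True

    def detect(self, event: dict) -> bool:
        # Take one snapshot so a concurrent swap cannot change rules mid-evaluation.
        snapshot = self._active
        return any(rule(event) for rule in snapshot.rules)
```

Rejecting non-increasing versions keeps a delayed or replayed push from rolling the fleet back to older logic.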

Shiny Objects

There’s a related distraction pulling CISO attention in the wrong direction at exactly the wrong moment.

The cybersecurity industry has spent the past twelve months aggressively marketing AI SOC, Agentic SOC, and autonomous investigation platforms. These are real capabilities. AI reasoning systems that triage alerts, investigate incidents, and correlate telemetry across sources are genuinely valuable. They address a real problem (alert volume overwhelming human analysts) with real technology.

But they don’t address the problem Mythos just exposed.

The AI SOC conversation is about making the existing detection-and-response paradigm faster and more efficient. Processing the haystack more quickly. Correlating signals more effectively. Automating the manual work that overwhelms Tier-1 analysts.

None of that matters if the underlying detection architecture can’t identify the threats in the first place.

An AI SOC is only useful if the EDR agent generates the right alerts. When Mythos-class AI creates exploits using legitimate OS functionality in ways no behavioral model has been trained to flag, the EDR generates no alert. The AI SOC has nothing to investigate. The autonomous response system has nothing to respond to. The telemetry is processed at machine speed and the threat is in none of it, because the detection architecture wasn’t designed for threats emerging from novel vulnerability classes discovered by AI.

The AI SOC is an optimization of the current paradigm. Mythos is a disruption of it. The industry wants to talk about optimizations because optimizations sell products. Disruptions kill incumbents. CISOs who invest in AI SOC capabilities without addressing the underlying endpoint architecture are buying a faster engine for a car driving toward a cliff.

What to Do Monday Morning

The Mythos disclosure window is open. It won’t stay open forever.

Assume your EDR has undiscovered vulnerabilities that AI will find. If Mythos found a 27-year-old bug in one of the most security-hardened operating systems ever built, it will find bugs in your EDR vendor’s codebase. Every EDR product is a massive, complex piece of software with decades of accumulated technical debt. The Glasswing partnership gives vendors access to Mythos for self-scanning, but there’s no guarantee they’ll find and fix everything before comparable capabilities proliferate.

Evaluate your endpoint architecture for adaptability, not just capability. Stop asking “what can it detect today?” and start asking “how fast can it adapt to threats it’s never seen before?” A monolithic agent with embedded detection logic adapts at the speed of agent updates. A lightweight sensor with cloud-delivered detection logic adapts at the speed of cloud deployment. In a world where AI generates novel exploits continuously, adaptation speed is the capability that matters.
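One way to turn “how fast can it adapt?” into a number you can ask a vendor for: measure the time from publishing a new detection rule until a given share of the fleet confirms it is active. The sketch assumes you can export one confirmation timestamp per endpoint (or none, if it never confirmed); that data shape and the function name are hypothetical, and vendor consoles expose rollout status differently.

```python
import math
from datetime import datetime, timedelta
from typing import Optional


def time_to_coverage(
    published: datetime,
    acked: list[Optional[datetime]],
    fraction: float = 0.95,
) -> Optional[timedelta]:
    """Time until `fraction` of endpoints confirm the new logic is active.

    `acked` holds one entry per endpoint: when it confirmed the update, or None if it
    never did. Returns None if the target coverage was never reached.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    needed = math.ceil(fraction * len(acked))
    confirmed = sorted(t for t in acked if t is not None)
    if needed == 0 or len(confirmed) < needed:
        return None
    # The needed-th confirmation is the moment coverage reaches the target.
    return confirmed[needed - 1] - published
```

Running it for both a routine agent update and a cloud-delivered rule push gives a like-for-like comparison of adaptation speed.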

Invest in categorical protections that don’t depend on prior knowledge of specific threats. Data-centric protection (obfuscation, tokenization, cloaking) protects data regardless of which vulnerability was exploited to reach it. Moving target defense disrupts exploitation regardless of which vulnerability the attacker targets. Deception technology detects intrusion regardless of technique. These controls are architecturally resistant to the Mythos problem because they don’t require having seen the specific exploit before. They define what authorized behavior looks like and render unauthorized access, however technically achieved, either detectable or meaningless.
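Tokenization is the easiest of these controls to sketch. In this minimal, hypothetical vault, sensitive values are swapped for random tokens, so a dataset stolen through any exploit holds only tokens. The in-memory dictionary stands in for what would, in practice, be a separately secured service; reusing tokens for repeated values keeps joins working but also reveals which records share a value.

```python
import secrets


class TokenVault:
    """Minimal tokenization sketch: sensitive values stay in the vault, not the dataset.

    The in-memory dicts are for illustration only; a real vault would be a separately
    secured, access-controlled service.
    """

    def __init__(self) -> None:
        self._token_to_value: dict[str, str] = {}
        self._value_to_token: dict[str, str] = {}

    def tokenize(self, value: str) -> str:
        # Reuse the existing token so joins on tokenized columns still work.
        if value in self._value_to_token:
            return self._value_to_token[value]
        token = "tok_" + secrets.token_urlsafe(16)
        self._token_to_value[token] = value
        self._value_to_token[value] = token
        return token

    def detokenize(self, token: str) -> str:
        return self._token_to_value[token]


if __name__ == "__main__":
    vault = TokenVault()
    record = {"name": "Alice", "ssn": vault.tokenize("123-45-6789")}
    # Whoever exfiltrates `record`, by whatever exploit, gets a random token.
    print(record["ssn"] != "123-45-6789")  # True
```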

Don’t mistake the Glasswing partnership for a solution. Glasswing is a disclosure process, not a product launch. Vendors scanning their own code with Mythos is good. It means they’ll fix the specific bugs Mythos finds. But it doesn’t mean their fundamental architecture will be adequate for the next model, or the one after that. Ask your EDR vendor not “are you in Glasswing?” but “what are you doing to fundamentally change your endpoint architecture for a world where AI discovers and weaponizes vulnerabilities at industrial scale?”

Prepare for the post-Mythos threat landscape. Comparable capabilities may proliferate within six to eighteen months [9]. That’s your planning window. Organizations that use it to diversify their endpoint security architecture (adding lightweight sensors, implementing categorical protections, and deploying preemptive controls that work when detection fails) will be positioned for what comes next. Those that rely solely on their EDR vendor’s assurance that it has everything covered are betting that legacy architectures can outrun AI-speed disruption. History suggests otherwise.

The Real Story

The real story of the Mythos release isn’t that AI can find zero-days. Researchers knew that was coming. Nor is it that Anthropic is being irresponsible about disclosure. It isn’t, and it deserves credit for that. It isn’t even that the specific vulnerabilities are particularly scary, though some are genuinely alarming.

The real story is that the fundamental assumptions underlying twenty years of endpoint security architecture have been permanently invalidated: that threats emerge at human speed, that signature and behavioral databases can keep pace with exploit evolution, and that heavy agents with embedded detection logic are the right unit of endpoint protection. Not weakened. Not challenged. Invalidated.

The industry will spend the next six to eighteen months pretending otherwise. EDR vendors will patch the Mythos findings, add “AI-powered vulnerability defense” to their marketing materials, and declare the crisis managed. The AI SOC vendors will argue that faster analysis of existing telemetry is the answer. The narrative will be that the current paradigm absorbs the disruption and emerges stronger.

It won’t. The paradigm that emerged from the last generation of threats (heavy agents, signature databases, behavioral heuristics, retrospective detection) cannot scale to a world where AI generates novel exploits at industrial pace. Endpoint security needs to be reinvented around lightweight sensors, programmable detection logic, categorical protections, and preemptive controls that work independently of prior threat knowledge.

The Glasswing window is the industry’s chance to start that reinvention. Let’s not waste it arguing about who’s a founding member.

Bob Kruse is the Chief Executive Officer at Arms Cyber, where he drives the strategic vision for next-generation preemptive security and next-generation endpoint security technology.

References

[1] Anthropic Frontier Red Team, “Claude Mythos Preview,” April 2026. https://red.anthropic.com/2026/mythos-preview/

[2] Axios, “Anthropic’s Newest AI Model Could Wreak Havoc. Most in Power Aren’t Ready,” April 2026. https://www.axios.com/2026/04/08/anthropic-mythos-model-ai-cyberattack-warning

[3] Axios, “Anthropic’s New Mythos Model System Card Shows Devious Behaviors,” April 2026. https://www.axios.com/2026/04/08/mythos-system-card

[4] NBC News, “Why Anthropic Won’t Release Its New Claude Mythos AI Model to the Public,” April 2026. https://www.nbcnews.com/tech/security/anthropic-project-glasswing-mythos-preview-claude-gets-limited-release-rcna267234

[5] The Register, “Anthropic Mythos Model Can Find and Exploit 0-Days,” April 2026. https://www.theregister.com/2026/04/07/anthropic_all_your_zerodays_are_belong_to_us/

[6] CNN, “Anthropic’s Next Model Could Be a ‘Watershed Moment’ for Cybersecurity,” April 2026. https://edition.cnn.com/2026/04/03/tech/anthropic-mythos-ai-cybersecurity

[7] Anthropic, “Project Glasswing,” April 2026. https://www.anthropic.com/glasswing

[8] Help Net Security, “Anthropic’s Claude Mythos Preview Finds and Exploits Zero-Days Across Every Major OS and Browser,” April 2026. https://www.helpnetsecurity.com/2026/04/08/anthropic-claude-mythos-preview-identify-vulnerabilities/

[9] Axios, “Anthropic Withholds Mythos Preview Model Because Its Hacking Is Too Powerful,” April 2026. https://www.axios.com/2026/04/07/anthropic-mythos-preview-cybersecurity-risks