Six months ago, the AI security conversation was dominated by prompt injection, model alignment, and supply chain integrity. Important problems, all of them. But they shared a common assumption: that the threat lived upstream. In the model, in the training data, in the API layer. And that if you could secure the pipeline, you could secure the outcome.

Then January 2026 happened.

A single open-source AI agent called Clawdbot went viral over one weekend, accumulated over 135,000 GitHub stars [1], and within 72 hours had exposed authentication tokens, leaked API keys, stored credentials in plaintext, and created what multiple security firms have called the biggest shadow AI incident of the year [2]. Researchers found over 21,000 publicly exposed instances [3]. Koi Security identified more than 820 malicious skills in its marketplace, up from 324 just weeks earlier [4]. Trend Micro documented threat actors using dozens of those skills to distribute the Atomic macOS infostealer [4]. Palo Alto Networks [5], Cisco [6], Kaspersky [7], Guardz [8], Tenable [9], and Oasis Security [4] all published independent analyses within days. The project has since been renamed twice, first to Moltbot, then to OpenClaw, and has been patched for multiple critical vulnerabilities including a one-click remote code execution flaw (CVE-2026-25253, CVSS 8.8) that could compromise the entire gateway through a single malicious link [4][7].

We’ve been watching this space closely, and what we’ve seen over the past six months has fundamentally changed how we think about AI security. The lesson from OpenClaw isn’t that one product was poorly secured. It’s that the entire industry’s approach to AI governance has a structural blind spot: the endpoint where AI agents actually execute is largely unmonitored, unenforced, and ungoverned.

This piece is our attempt to articulate what we’ve seen, what we think it means, and where we believe the industry needs to go.

What we got right — and what we missed

When we look at how the AI security landscape was framed even six months ago, the conversation was heavily weighted toward the model layer. Prompt injection defense, guardrails on model outputs, alignment research, red-teaming exercises against LLM APIs. OWASP’s LLM Top 10 focused primarily on risks inherent to language models themselves, training data poisoning, insecure output handling, model denial of service [10]. The security controls being built reflected that framing: input sanitization, output filtering, API gateway monitoring, DLP integration at the prompt level.

That work was and remains important. But it was built on an implicit assumption: that the model is the execution environment. That the dangerous thing is what the model says or generates. The AI agent revolution flipped that assumption. The dangerous thing is expanding beyond just what the model says. It’s also what the agent does. An agent doesn’t just generate text. It reads files, executes shell commands, sends emails, browses the web, manages credentials, modifies databases, and takes actions across the full scope of the user’s digital life. The execution environment isn’t the model. It’s the endpoint.

From the business side, what struck us was how fast the gap between adoption and governance opened. We’d been tracking Gartner’s predictions on agentic AI. 40% of enterprise applications featuring task-specific AI agents by end of 2026, up from less than 5% in 2025 [11]. But what the Clawdbot incident demonstrated was that adoption doesn’t wait for enterprise deployment cycles. A developer installs an open-source AI agent on a Friday night, connects it to their work email and Slack, and by Monday morning you have an unmanaged autonomous system with shell access and persistent memory operating inside your corporate environment, connected to production data, and completely invisible to your security team. Guardz described it perfectly: a “Remote Access Trojan with a personality” [8].

The Gravitee State of AI Agent Security 2026 report confirmed what we suspected: 81% of teams are past the planning phase into active testing or production, but only 14.4% have full security/IT approval for their agent deployments [12]. On average, only 47% of an organization’s AI agents are actively monitored [12]. And 88% of organizations reported confirmed or suspected AI agent security incidents in the past year [12]. The governance gap isn’t narrowing. It’s accelerating.

The three layers of AI governance — and the one that’s missing

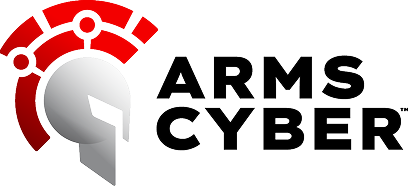

When we map out how the industry is currently approaching AI governance, we see three layers getting significant attention, and a fourth that’s almost entirely neglected.

Layer 1: Model governance. This is where the industry started. Guardrails on model behavior, alignment tuning, safety filters on inputs and outputs, red-teaming against adversarial prompts. The major model providers (Anthropic, OpenAI, Google) invest heavily here, and for good reason. This layer ensures the model itself doesn’t generate harmful content, leak training data, or respond to prompt injection in ways that bypass safety constraints. It’s mature, well-resourced, and increasingly effective.

Layer 2: Supply chain governance. This layer addresses the integrity of the AI ecosystem — vetting model providers, auditing training data, securing the API pipeline, monitoring for model drift, managing dependencies. The OWASP Top 10 for Agentic Applications (released December 2025) [13] and MITRE ATLAS both map significant risks here, including compromised component integration, insecure plugin/extension supply chains, and cascading failures in multi-agent systems. The explosion of malicious MCP (Model Context Protocol) servers and poisoned skills in marketplaces like ClawHub has made this layer urgently relevant [4][6].

Layer 3: Prompt and interaction governance. This is the AI gateway model, monitoring what users send to AI systems, applying DLP policies to prevent sensitive data from entering prompts, logging AI interactions for compliance and audit. Products in this space inspect the conversation between human and model, flagging policy violations, blocking exfiltration attempts embedded in prompts, and providing visibility into shadow AI usage. Cisco AI Defense [14], Check Point’s GenAI Protect [14], and the recently acquired Acuvity platform (now part of Proofpoint) [15] all operate primarily at this layer.

Layer 4: Endpoint execution governance. This is the blind spot. When an AI agent runs on an endpoint, executing shell commands, reading files, writing to databases, managing OAuth tokens, sending emails, browsing the web, the actions it takes are endpoint actions. They happen at the operating system level. They use the user’s credentials and permissions. They interact with the file system, the network stack, the process scheduler, the registry. And in the vast majority of deployments today, no security tool is monitoring, governing, or enforcing policy on those actions as AI agent actions.

Tools like EDR see the processes. It sees the network connections. It sees the file operations. But it doesn’t know, and has no way of knowing, that those actions were initiated by an autonomous AI agent operating with delegated authority, potentially under the influence of a poisoned MCP tool description, a hijacked goal, or a compromised skill from an unvetted marketplace. The telemetry exists, but the context is missing.

OpenClaw: a case study in endpoint-level AI risk

To understand why endpoint execution governance matters, it helps to walk through what actually happened with OpenClaw in concrete terms — not just the vulnerabilities that were disclosed, but the architectural decisions that made those vulnerabilities so impactful.

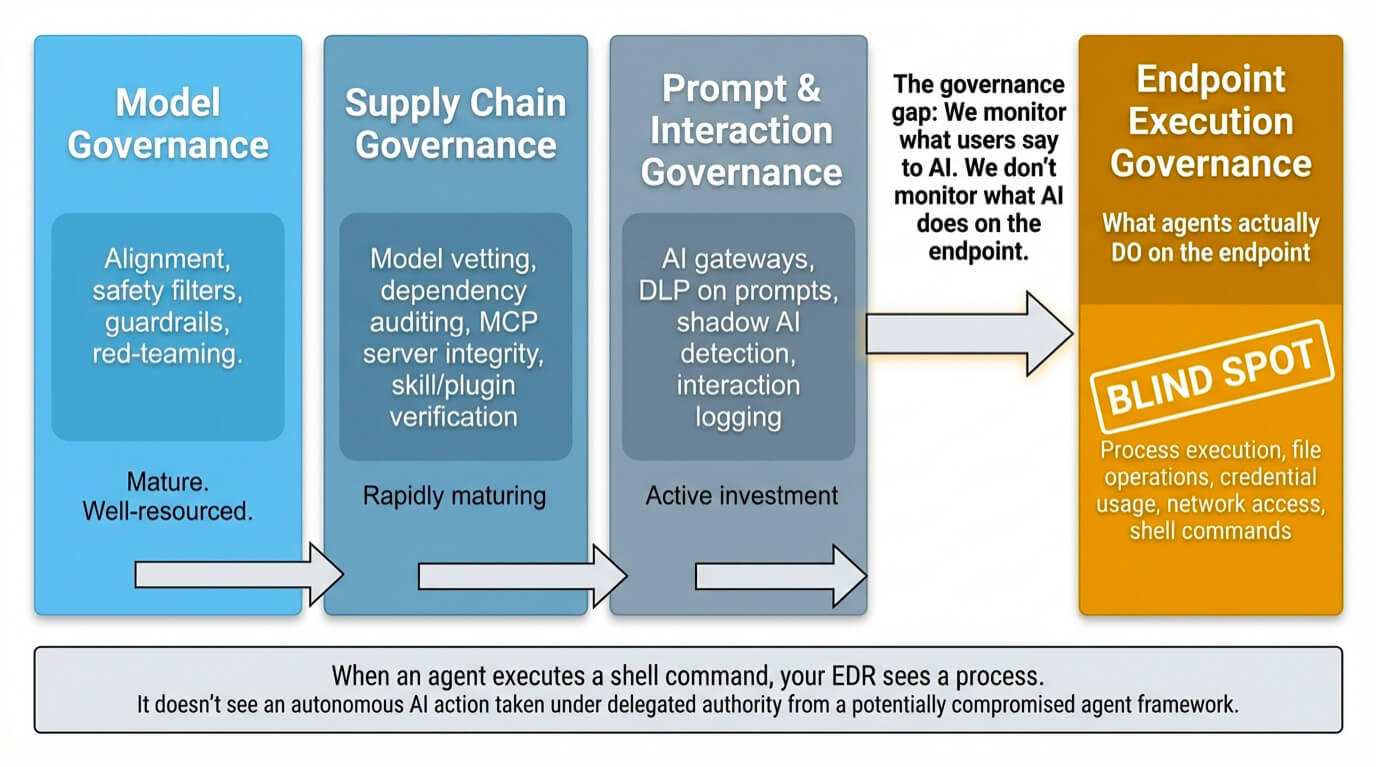

OpenClaw (the current name; previously Clawdbot, then Moltbot) is an open-source AI agent that runs locally on the user’s machine [5]. It integrates with messaging apps, calendars, developer tools, email, file systems, and web browsers. It can execute shell commands, read and write files, manage credentials, and take autonomous actions across the user’s digital environment [1][5]. It connects to various LLMs (Claude, GPT, local models via Ollama) and supports a plugin ecosystem called “skills” distributed through ClawHub and SkillsMP [4]. The core architectural decision, running locally with the user’s full system privileges, is what makes it both powerful and dangerous.

Here’s what the security community found:

Authentication failures at the gateway. OpenClaw’s gateway assumed that any connection from localhost was trusted [9]. When deployed behind a reverse proxy, external connections appeared to originate from localhost, bypassing authentication entirely [2]. Censys identified over 21,000 exposed instances reachable from the public internet [3].

Credential storage in plaintext. API keys, OAuth tokens, user profiles, and conversation memories were stored in plaintext Markdown and JSON files on the local filesystem [9]. Any attacker who gained access to the endpoint, through the authentication bypass, a malicious skill, or any other vector, could harvest credentials for every service the agent was connected to [1][9].

Malicious skills as a supply chain attack. The ClawHub marketplace grew explosively, and so did the malicious content within it. Koi Security found over 820 malicious skills in ClawHub, up from 324 [4]. Trend Micro documented 39 malicious skills across ClawHub and SkillsMP being used to distribute infostealers [4]. Cisco’s AI Threat Research team ran a known-vulnerable third-party skill against OpenClaw and concluded that the platform fails decisively at preventing malicious skill execution [6]. In a separate analysis, Cisco found that 26% of over 31,000 agent skills analyzed contained at least one vulnerability [6].

Persistent memory as an attack surface. Palo Alto Networks highlighted a characteristic that makes OpenClaw fundamentally different from a traditional tool: persistent memory [5]. The agent remembers context from previous interactions across sessions. If an attacker poisons that memory, through a malicious skill, a crafted message, or a compromised data source, the corruption persists and influences all future agent behavior without any visible sign of compromise [5][16].

From a technical perspective, what’s critical to understand is that every one of these risks manifests as endpoint behavior. The malicious skill executes shell commands on the endpoint. The credential theft reads files from the endpoint filesystem. The authentication bypass grants access to a service running on the endpoint. The memory poisoning modifies data stored on the endpoint. If you’re monitoring the model API for prompt injection, you don’t see any of this. If you’re scanning the supply chain for known-bad dependencies, you might catch some of it, but only before installation, not during execution. If you’re running an AI gateway that inspects prompts between user and model, you miss the actions entirely because the agent’s execution happens downstream of the model interaction.

The only place where all of these risks become visible is at the endpoint. And the only tool that has existing visibility into endpoint behavior, process creation, file operations, network connections, credential access, shell command execution, is the endpoint security agent that’s already deployed: the EDR.

Why current AI governance tools aren’t enough alone

The AI governance market is evolving rapidly, and there are excellent tools addressing specific parts of the problem. But most of them operate at layers at the network, API, and prompt layers, and they face a fundamental architectural limitation when it comes to what agents do on the endpoint.

AI gateways and prompt monitors inspect the traffic between users and AI services. They can detect sensitive data in prompts, enforce DLP policies, and log interactions [14][15]. But when an AI agent takes an autonomous action on the endpoint, reading a file, executing a command, sending an email, that action doesn’t pass through the gateway. The gateway sees the user’s prompt and the model’s response. It doesn’t see the agent’s subsequent execution of that response.

MCP security tools address the integrity of the tooling ecosystem, vetting MCP servers, scanning tool descriptions for poisoning, monitoring for malicious modifications. This is critical work, especially given the documented attacks: Invariant Labs demonstrated that a single malicious MCP server could exfiltrate an entire WhatsApp conversation history by embedding hidden instructions in tool descriptions [17][18]. But MCP security operates at the integration layer, before execution. Once the agent decides to execute an action, MCP security has handed off. The enforcement gap is at runtime, on the endpoint.

Supply chain scanners like Cisco’s Skill Scanner can analyze skills and plugins for threats, identifying vulnerable or malicious components before they’re installed [6]. But supply chain scanning is inherently pre-deployment. A skill that passes inspection at installation can be updated to include malicious behavior later, can behave differently based on environmental conditions, or can be exploited through prompt injection at runtime in ways that static analysis doesn’t capture.

What we keep coming back to in conversations with CISOs is the analogy to where we were with SaaS security ten years ago. Shadow IT was the problem then, users adopting cloud applications without security team visibility or approval. The industry responded with CASBs, which provided visibility and control at the network layer. But CASBs had a gap: they couldn’t see what happened inside the application after the connection was established. The industry eventually solved that with API-level integrations and endpoint-level agents.

We’re at the same inflection point with AI agents. Shadow AI is the new shadow IT, the Gravitee report found that only 14.4% of organizations have full security approval for their agent fleet [12]. The tools being built now are analogous to early CASBs: they provide visibility at the gateway level, at the supply chain level, at the prompt level. What’s missing is the endpoint-level enforcement that makes governance real at the point of execution.

What endpoint-integrated AI governance actually looks like

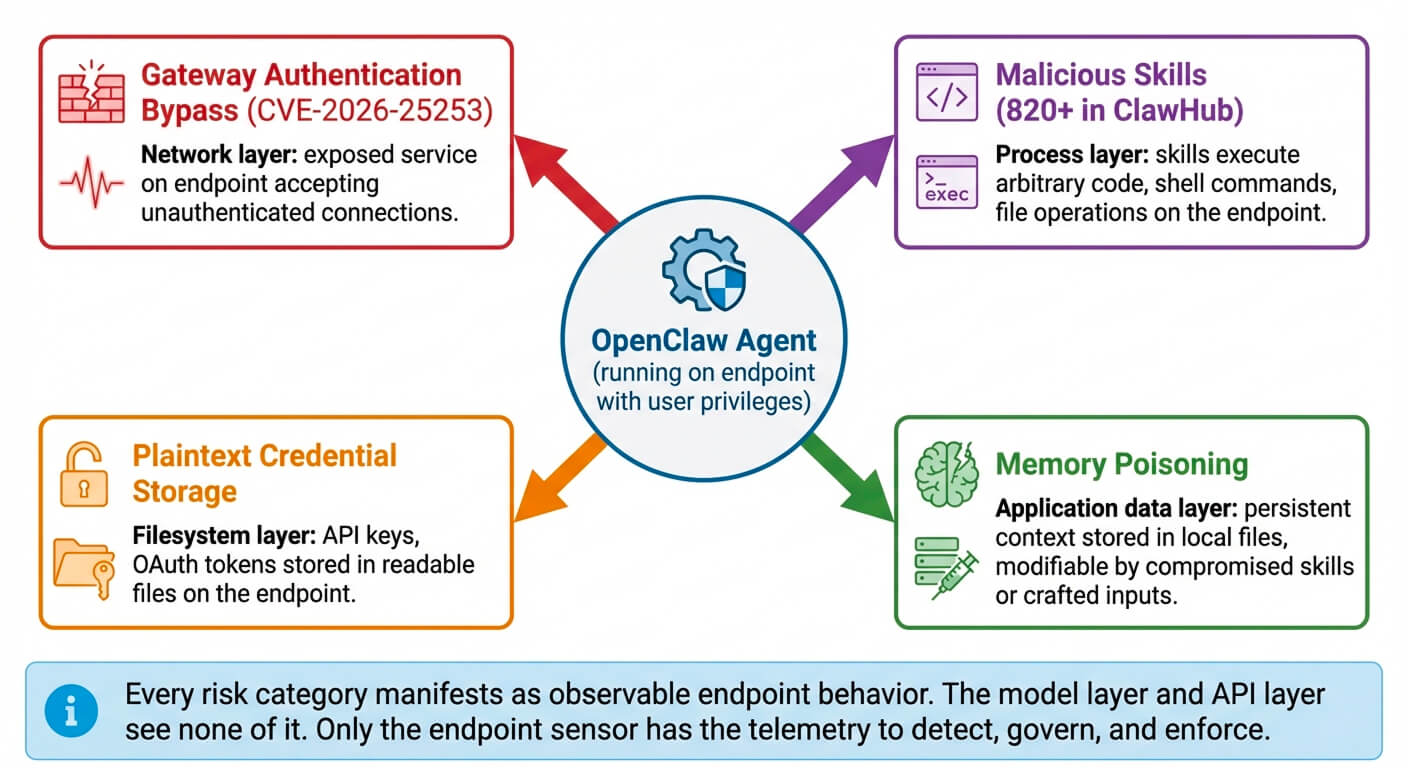

The thesis is straightforward: AI governance must extend to the endpoint because that’s where AI agents act. The endpoint sensor, whether it’s an EDR agent, a dedicated agentic endpoint security agent, or an integrated capability that combines both, is the only component with the system-level visibility required to observe, evaluate, and enforce policy on what AI agents actually do.

Here’s what that integration needs to provide:

AI agent discovery and inventory. Before you can govern AI agents, you need to know they exist. Endpoint sensors need the ability to identify AI agent processes, not just known agents like OpenClaw or Ollama, but any software that exhibits agentic behavior: making LLM API calls, executing tool actions, maintaining persistent state, operating MCP servers. This is the foundational visibility layer. Palo Alto Networks described this requirement clearly when they announced their intent to acquire Koi, a company they described as pioneering “Agentic Endpoint Security” – the ability to see all AI software, understand its risk level, and control the AI ecosystem in real time [19]. The fact that Palo Alto is calling agentic endpoint security “a new category of protection” that will “soon become a standard requirement for enterprise security” tells you where the market is headed [19].

Runtime behavioral monitoring of agent actions. Once agents are identified, the endpoint sensor must monitor their actions with AI-specific context. When an agent executes a shell command, the sensor should know it was an AI-initiated action, which agent framework initiated it, what skill or tool triggered it, and what authority chain authorized it. This is fundamentally different from generic process monitoring. An EDR that sees “bash executed a curl command” has some telemetry. An AI-governance-integrated endpoint sensor that sees “OpenClaw agent, running skill ‘DataSync v2.1’ from ClawHub, executed a curl command to exfiltrate the contents of ~/.ssh/id_rsa to an external IP, triggered by a tool description containing hidden instructions” has actionable intelligence.

Policy enforcement at the execution layer. Visibility without enforcement is monitoring, not governance. The endpoint sensor must be able to block agent actions that violate policy, preventing unauthorized file access, blocking network connections to unapproved destinations, stopping shell command execution that exceeds the agent’s authorized scope, and enforcing least privilege at the system call level. This is where the OWASP Top 10 for Agentic Applications’ concept of “least agency” becomes critical [13][20]: the principle that agents should only have the minimum autonomy required for their task must be enforced somewhere, and that somewhere is the endpoint.

Credential and identity governance. The State of AI Agent Security 2026 report found that only 22% of organizations treat AI agents as independent identity-bearing entities, while 46% still rely on shared API keys [12]. At the endpoint level, this means agents are operating with the user’s full credentials and permissions. Endpoint-integrated AI governance must enforce credential isolation, ensuring that agent-accessible credentials are scoped, rotated, and auditable independently of the user’s broader credential set. When an agent accesses an OAuth token stored on the filesystem, the endpoint sensor should evaluate whether that access is within the agent’s authorized scope, not just whether the user has permission.

MCP and skill integrity verification at runtime. Supply chain scanning at installation is necessary but insufficient. The endpoint sensor should continuously verify the integrity of MCP servers, skills, and tool descriptions at runtime, detecting modifications to tool metadata that could indicate poisoning [17], flagging MCP servers that request permissions beyond their declared scope [21], and alerting on behavioral changes in skills that diverge from their baseline. This closes the gap between static supply chain analysis and runtime execution behavior.

The MCP problem: when the connection layer becomes the attack surface

One area where endpoint governance is especially critical is the Model Context Protocol ecosystem. MCP has rapidly become the standard for connecting AI models to external tools and data sources, what some researchers have started calling “the TCP/IP of the agentic AI era” [22]. But MCP’s security model has significant gaps that the industry is only beginning to address, and many of those gaps can only be covered at the endpoint.

Invariant Labs documented the first major class of MCP attack – Tool Poisoning – in early 2025, demonstrating that malicious instructions embedded in MCP tool descriptions are invisible to users but visible to the AI model [17]. A tool that appears to be a simple text formatter can contain hidden instructions that direct the model to exfiltrate SSH keys, read configuration files, or forward private messages to attacker-controlled destinations [17][18]. The attack works because MCP clients pass tool descriptions to the LLM as context, and the LLM follows those instructions alongside the user’s actual request [18].

The timeline of MCP security incidents since then has been sobering [23]. Researchers demonstrated WhatsApp message exfiltration through a poisoned MCP server [17][23]. The official GitHub MCP server was shown to be vulnerable to prompt injection that could exfiltrate private repository contents [23]. Anthropic’s own MCP Inspector developer tool was found to allow unauthenticated remote code execution [23]. JFrog disclosed a critical command injection bug in mcp-remote, a widely used OAuth proxy [23]. Palo Alto Networks’ Unit 42 demonstrated three novel attack vectors through MCP sampling: resource theft, conversation hijacking, and covert tool invocation [24].

Every one of these attacks ultimately executes on the endpoint. The poisoned tool description triggers an action on the local machine. The exfiltrated data is read from local files and sent over local network connections. The command injection runs in the local shell. MCP security solutions that inspect tool descriptions and enforce authentication at the protocol level address part of the problem, but the execution still happens at the OS level, using the endpoint’s resources, credentials, and network access. An endpoint sensor that monitors for anomalous file access patterns, unusual network destinations, and shell commands that don’t match the agent’s declared purpose provides a critical enforcement layer that protocol-level security alone cannot deliver.

Where we see this going

We see three trends converging over the next twelve months that will make endpoint-integrated AI governance a standard requirement rather than an emerging concept.

Trend 1: Regulatory pressure will force runtime enforcement. The EU AI Act’s high-risk system rules take effect in August 2026, and they require continuous monitoring and logging of AI system behavior, not just pre-deployment assessment [25]. Singapore’s Model AI Governance Framework for Agentic AI, released in early 2026, is the first national framework to address autonomous agent governance specifically [26]. These frameworks will require organizations to demonstrate that they can observe and control what AI agents do, not just what models generate, and that requires enforcement at the point of execution.

Trend 2: Security vendor convergence. The acquisitions tell the story. Proofpoint acquired Acuvity (AI security and governance across endpoints, browsers, and MCP infrastructure) in February 2026 [15]. Palo Alto Networks announced its intent to acquire Koi (agentic endpoint security) the same month, explicitly calling it a new required security category [19]. These are not niche acquisitions, they’re market signals that the major security platforms see endpoint-level AI governance as a fundamental capability gap that must be filled. We expect EDR vendors to follow with AI governance integration, either through acquisition or organic development, within the next year.

Trend 3: The OWASP Agentic Top 10 will drive operationalization. The OWASP Top 10 for Agentic Applications, released December 2025, provides the first widely recognized risk framework specifically for autonomous AI systems [13]. Its concepts, goal hijacking, tool misuse, identity and privilege abuse, memory poisoning, rogue agent behavior, map directly to endpoint-observable behaviors [13][20]. As organizations begin operationalizing this framework (and regulators begin referencing it), the need for endpoint-level detection and enforcement of these risks will become concrete. Microsoft, NVIDIA, and AWS have already begun referencing OWASP’s agentic threat models in their own security guidance [10], and the formation of the Agentic AI Foundation (AAIF) by OpenAI, Anthropic, and Block signals that the platform providers themselves recognize the governance gap [27].

From a technical perspective, we expect to see the emergence of a unified endpoint sensor that combines traditional EDR capabilities (process monitoring, behavioral analysis, threat detection) with AI governance capabilities (agent discovery, action monitoring, policy enforcement, credential governance). The telemetry requirements overlap significantly, both need process-level visibility, file system monitoring, network connection tracking, and credential access auditing. The difference is context: EDR interprets that telemetry through the lens of threat detection (is this process malicious?), while AI governance interprets it through the lens of policy enforcement (is this agent action authorized?). Combining both interpretations on a single sensor, with shared telemetry and coordinated enforcement, is the architectural direction that makes the most sense.

The worst long term outcome would be deploying a separate AI governance agent alongside the existing EDR agent alongside the existing DLP agent, creating three agents competing for endpoint resources and creating gaps between their respective visibility. The best outcome is integration — AI governance capabilities built into or tightly coupled with the endpoint security platform that’s already deployed, sharing telemetry, sharing enforcement mechanisms, and sharing the single-agent architecture that enterprises have been moving toward for the past decade.

What defenders should do now

Organizations don’t need to wait for the market to converge. There are concrete steps that security teams can take today to begin closing the endpoint AI governance gap:

Establish visibility. You can’t govern what you can’t see. Deploy discovery capabilities, even basic ones, to identify AI agents, MCP servers, Ollama instances, and other agentic software running on endpoints across your environment. If your EDR supports custom detection rules, build rules that detect the installation and execution of known AI agent frameworks. The OpenClaw/Clawdbot incident demonstrated that agents can appear in your environment overnight, installed by individual users without any centralized deployment or approval [1][8].

Define AI agent policies. Before you can enforce, you need to define what “authorized” looks like. Which AI agent frameworks are approved for your environment? What data sources can they access? What actions can they take? What credentials can they use? These policies should exist as written governance documents now, even if automated enforcement comes later. The OWASP Top 10 for Agentic Applications provides an excellent framework for identifying the risk categories that your policies need to address [13].

Extend existing controls. Your EDR, DLP, and identity platforms already have telemetry and enforcement capabilities that are relevant to AI agent governance. Application whitelisting can prevent unauthorized agent frameworks from executing. DLP policies can monitor for sensitive data in agent-accessible directories. Identity controls can enforce MFA on credential access by agent processes. Network segmentation can limit what endpoints running AI agents can reach. These are not AI-specific controls, but they reduce the blast radius of a compromised agent significantly.

Monitor for the signals that matter. Even without dedicated AI governance tooling, security teams can build detection logic around the behavioral patterns associated with agentic AI risk: processes making LLM API calls that also execute shell commands, unusual file access patterns in credential storage directories, network connections to MCP server endpoints, skill/plugin installation from unvetted sources, and anomalous patterns that suggest memory poisoning or goal hijacking (an agent suddenly accessing resources outside its normal operating scope).

And perhaps most importantly, start thinking about your endpoint security architecture as the foundation for AI governance, not as a separate concern. The same sensor that detects ransomware, watches for lateral movement, and monitors for credential theft is the sensor that will need to detect a compromised AI agent executing unauthorized commands, a poisoned MCP tool exfiltrating sensitive data, or a rogue skill installing persistent access. The telemetry is the same. The enforcement mechanisms are the same. The integration must follow.

The endpoint is where AI meets reality

The AI governance conversation has been heavily focused on the model, the supply chain, and the prompt. for understandable reasons. Those are the new and unfamiliar parts of the stack. But the part of the stack where AI actually affects the world – where agents read files, execute commands, access credentials, send messages, and modify data – is the endpoint. It’s familiar territory. We have decades of experience securing it. We have sensors deployed on it. We have enforcement mechanisms built for it.

What we haven’t done yet is connect those mechanisms to the AI governance problem. That connection is the next major evolution in enterprise security architecture. The organizations that make it first will be the ones who can adopt AI agents with confidence, knowing that their governance extends to the point where it matters most: where the agent acts.

The OpenClaw incident was a preview. The next one will be bigger, more targeted, and embedded inside an enterprise deployment rather than an open-source project. Whether you’re ready for it depends on whether your security architecture sees what AI agents do on the endpoint, or only what they say to the model.

Brad Potteiger is the CTO and Bob Kruse is the CEO at Arms Cyber. This is one in a series examining how preemptive security, endpoint resilience, and adaptive governance address the structural challenges in securing AI-driven enterprise environments.

References

[1] Reco, “OpenClaw Security Crisis: Detect AI Agent Risks,” February 2026. https://www.reco.ai/blog/openclaw-the-ai-agent-security-crisis-unfolding-right-now/

[2] DTS Solutions, “The ClawdBot Vulnerability: How a Hyped AI Agent Became a Security Liability,” January 2026. https://www.dts-solution.com/the-clawbot-vulnerability-how-a-hyped-ai-agent-became-a-security-liability/

[3] Bitsight, “OpenClaw Security: Risks of Exposed AI Agents Explained,” February 2026. https://www.bitsight.com/blog/openclaw-ai-security-risks-exposed-instances/

[4] Dark Reading, “Critical OpenClaw Vulnerability Exposes AI Agent Risks,” March 2026. https://www.darkreading.com/application-security/critical-openclaw-vulnerability-ai-agent-risks/

[5] Palo Alto Networks, “OpenClaw May Signal the Next AI Security Crisis,” February 2026. https://www.paloaltonetworks.com/blog/network-security/why-moltbot-may-signal-ai-crisis/

[6] Cisco, “Personal AI Agents like OpenClaw Are a Security Nightmare,” January 2026. https://blogs.cisco.com/ai/personal-ai-agents-like-openclaw-are-a-security-nightmare/

[7] Kaspersky, “Key OpenClaw Risks,” February 2026. https://www.kaspersky.com/blog/moltbot-enterprise-risk-management/55317/

[8] Guardz, “When AI Agents Go Wrong: ClawdBot’s Security Failures, Active Campaigns, and Defense Playbook,” January 2026. https://guardz.com/blog/when-ai-agents-go-wrong-clawdbots-security-failures-active-campaigns-and-defense-playbook/

[9] Tenable, “Clawdbot: How to Mitigate Agentic AI Security Vulnerabilities,” February 2026. https://www.tenable.com/blog/agentic-ai-security-how-to-mitigate-clawdbot-moltbot-openclaw-vulnerabilities/

[10] OWASP, “OWASP Top 10 for Agentic Applications 2026 — The Benchmark for Agentic Security in the Age of Autonomous AI,” December 2025. https://genai.owasp.org/2025/12/09/owasp-top-10-for-agentic-applications-the-benchmark-for-agentic-security-in-the-age-of-autonomous-ai/

[11] Acuvity, “The Clawdbot Dumpster Fire: 72 Hours That Exposed Everything Wrong With AI Security,” January 2026. https://acuvity.ai/the-clawdbot-dumpster-fire-72-hours-that-exposed-everything-wrong-with-ai-security/

[12] Gravitee, “State of AI Agent Security 2026 Report: When Adoption Outpaces Control,” January 2026. https://www.gravitee.io/blog/state-of-ai-agent-security-2026-report-when-adoption-outpaces-control/

[13] OWASP, “OWASP Top 10 for Agentic Applications for 2026,” December 2025. https://genai.owasp.org/resource/owasp-top-10-for-agentic-applications-for-2026/

[14] AI News, “Best AI Security Solutions 2026: Top Enterprise Platforms Compared,” March 2026. https://www.artificialintelligence-news.com/news/best-ai-security-solutions-2026-top-enterprise-platforms-compared/

[15] Proofpoint, “Proofpoint Acquires Acuvity to Deliver AI Security and Governance Across the Agentic Workspace,” February 2026. https://www.proofpoint.com/us/newsroom/press-releases/proofpoint-acquires-acuvity-deliver-ai-security-and-governance-across/

[16] Modulos, “OWASP Top 10 for Agentic AI: The Governance Gap,” February 2026. https://www.modulos.ai/blog/owasp-top-10-for-agentic-ai-the-governance-gap/

[17] Invariant Labs, “MCP Security Notification: Tool Poisoning Attacks,” April 2025. https://invariantlabs.ai/blog/mcp-security-notification-tool-poisoning-attacks/

[18] Simon Willison, “Model Context Protocol has prompt injection security problems,” April 2025. https://simonwillison.net/2025/Apr/9/mcp-prompt-injection/

[19] Palo Alto Networks, “Securing the Agentic Endpoint,” February 2026. https://www.paloaltonetworks.com/blog/2026/02/securing-the-agentic-endpoint/

[20] Aikido, “OWASP Top 10 for Agentic Applications (2026): Full Guide to AI Agent Security Risks,” December 2025. https://www.aikido.dev/blog/owasp-top-10-agentic-applications/

[21] Red Hat, “Model Context Protocol (MCP): Understanding Security Risks and Controls,” November 2025. https://www.redhat.com/en/blog/model-context-protocol-mcp-understanding-security-risks-and-controls/

[22] Adversa AI, “Top MCP Security Resources — February 2026,” February 2026. https://adversa.ai/blog/top-mcp-security-resources-february-2026/

[23] AuthZed, “A Timeline of Model Context Protocol (MCP) Security Breaches.” https://authzed.com/blog/timeline-mcp-breaches/

[24] Palo Alto Networks Unit 42, “New Prompt Injection Attack Vectors Through MCP Sampling,” December 2025. https://unit42.paloaltonetworks.com/model-context-protocol-attack-vectors/

[25] Secure Privacy, “AI Risk & Compliance 2026: Enterprise Governance Overview.” https://secureprivacy.ai/blog/ai-risk-compliance-2026/

[26] Vectra, “AI Governance Tools: Selection and Security Guide for 2026.” https://www.vectra.ai/topics/ai-governance-tools/

[27] CIO, “Overcome Governance and Trust Issues to Drive Agentic AI,” December 2025. https://www.cio.com/article/4105490/overcome-governance-and-trust-issues-to-drive-agentic-ai.html